An Architectural Perspective on Consumer AR Displays

Since leaving Meta, I’ve spent time exploring topics that interest me—though not always immediately useful. One subject I haven’t previously devoted much attention to is augmented reality display architecture. After many years of building and evaluating these systems, I’ve found value in stepping back and observing how the field continues to evolve.

Recently, I began reflecting again on several questions about AR systems, including the architectural challenges of AR displays. This document captures some of those thoughts (based on material I prepared for a talk at the Optical Imaging Science Congress in August 2025). It is not intended to be comprehensive and is largely my opinion. Instead, it offers an architectural perspective on the constraints that define the problem and a framework for thinking about how meaningful progress might occur.

The Three Axes of Viability

Any discussion of AR displays has to begin with AR glasses as a system.

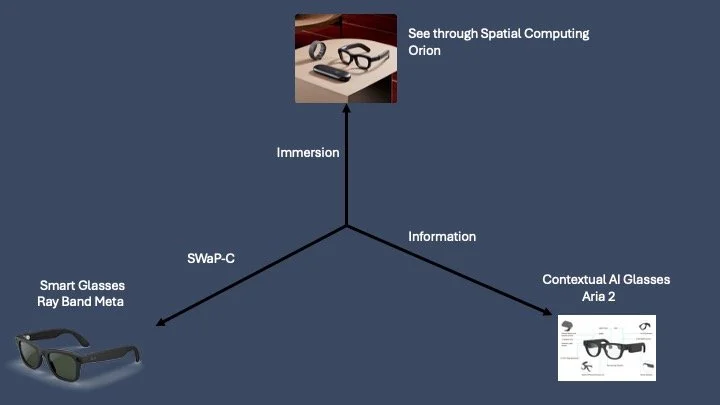

Bernard Kress has often described mixed reality as a continuum between “smart glasses” and “spatial computing.” I think that framing is directionally correct, but incomplete. There is a third axis that matters just as much: contextual AI.

Together, these form what I think of as the three axes of viability (see Figure 1). For each axis, Figure 1 also gives an example of what might be considered a state-of-the-art system, drawn from Meta’s offerings. I’ve used Meta not because I think these are the best examples but because they are the ones I’m most familiar with.

The first axis—smart glasses—is fundamentally about SWaP-C: size, weight, power, and cost. This term comes from aerospace, but it applies perfectly to consumer eyewear. If the device is too heavy, too bulky, too short-lived, or too expensive, nothing else matters. Comfort and wearability are gating constraints.

The second axis—often called spatial computing—is better described as immersion. Immersion reflects field of view, brightness, resolution, optical efficiency, image quality, and overall visual integration with the real world. A system can be lightweight and affordable, but if it fails to deliver a compelling visual experience, it will not create sustained value.

The third axis is contextual AI. This refers to the system’s ability to sense and interpret the world from an egocentric perspective. RGB cameras, depth sensing, gaze tracking, inertial sensors, microphones, and other inputs feed this stack. Contextual AI determines whether the system is merely a display—or an intelligent companion.

A viable consumer AR system must cross a threshold on all three axes at the same time. That simultaneity is the hard part.

Figure 1: Three Axes of Viability for a Consumer AR System

The Problem Is Conjunction, Not Optimization

AR is often discussed in terms of improving a specific component: brighter displays, better waveguides, more efficient processors, improved depth sensing, and so on.

But the architectural difficulty isn’t maximizing any single parameter. The difficulty is satisfying all of them simultaneously. The operative word in AR system design is “and.” The system must meet weight limits and deliver sufficient brightness and provide meaningful field of view and run advanced perception algorithms and remain thermally comfortable and hit cost targets. Every variable interacts with every other variable.
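The difference between conjunction and optimization can be made concrete with a minimal sketch. The scores, the 0.6 threshold, and the three example systems below are hypothetical placeholders chosen for illustration; they are not the scoring used for the figures in this document.

```python
from dataclasses import dataclass


@dataclass
class AxisScores:
    """Scores in [0, 1] on the three axes of viability (hypothetical values)."""
    swap_c: float         # size, weight, power, and cost
    immersion: float      # field of view, brightness, image quality
    contextual_ai: float  # sensing and interpreting the world


def mean_score(s: AxisScores) -> float:
    """Optimization view: a strong axis can compensate for a weak one."""
    return (s.swap_c + s.immersion + s.contextual_ai) / 3.0


def is_viable(s: AxisScores, threshold: float = 0.6) -> bool:
    """Conjunction view: every axis must clear the threshold at the same time."""
    return min(s.swap_c, s.immersion, s.contextual_ai) >= threshold


# Hypothetical systems, for illustration only.
display_free_glasses = AxisScores(swap_c=0.9, immersion=0.1, contextual_ai=0.6)
immersive_prototype = AxisScores(swap_c=0.2, immersion=0.9, contextual_ai=0.7)
balanced_system = AxisScores(swap_c=0.65, immersion=0.65, contextual_ai=0.65)

for name, system in [
    ("display-free glasses", display_free_glasses),
    ("immersive prototype", immersive_prototype),
    ("balanced system", balanced_system),
]:
    print(f"{name}: mean={mean_score(system):.2f}, viable={is_viable(system)}")
```

Under these assumed numbers, the immersive prototype has a respectable average yet fails the conjunction test, because the weakest axis is the one that decides viability.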

When a new breakthrough appears, my first instinct is always to ask: what’s missing?

· A smaller projector often reduces lumens per watt.

· A high-efficiency waveguide may increase thickness or introduce ghosting.

· A new material may enable new geometries but introduce instability or optical inhomogeneity.

Nothing moves independently.

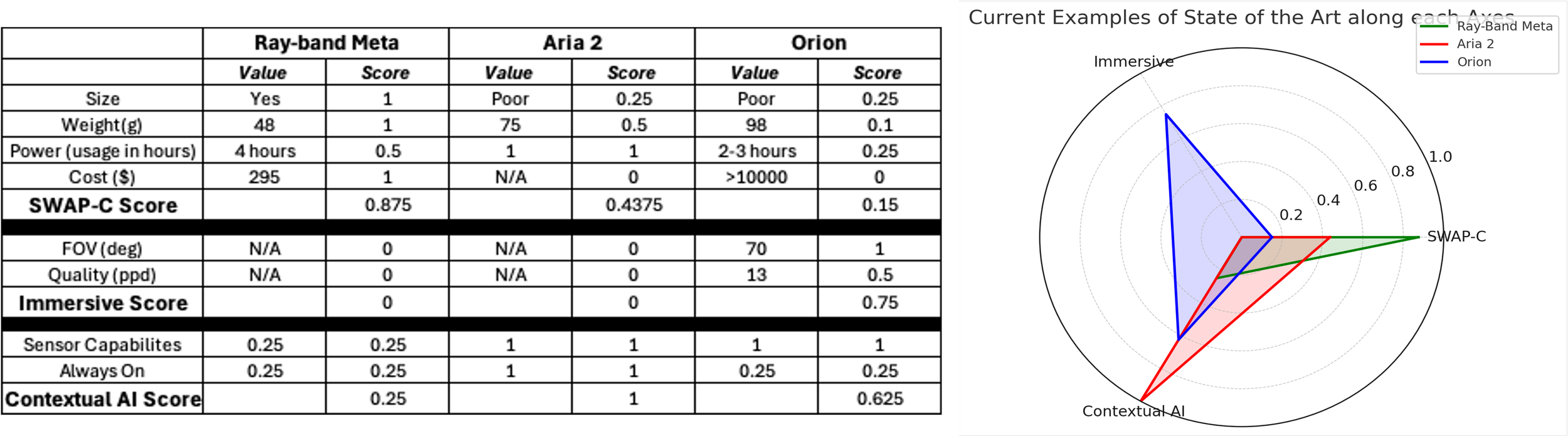

To illustrate this, I’ve scored the three example offerings from Meta along the three axes to show how optimizing on one axis necessarily means sacrificing on another. The resulting spider diagram is shown on the right of Figure 2, and the simple analysis behind the scores is shown on the left. Of course, this is only quasi-analytical on my part, and a serious analysis should be done by an independent team with preset criteria, but the net conclusion of my simple analysis illustrates the point.

Figure 2: Left - Simplified analysis of three state of the art AR systems. Right - Spider diagram comparison of the three systems on the three axes of viability

A Simple Thought Experiment

The goal of this section is to build intuition for the magnitude of the challenge. Building intuition on what is possible is easier said than done. Discussion around “next-generation” AR displays is often clouded by hype and competing objectives, making it difficult to separate architectural reality from optimistic extrapolation. Models, simulations, experiments, and publications are all useful. But they can also mislead—particularly when they are framed to support a specific narrative rather than to provide a balanced assessment.

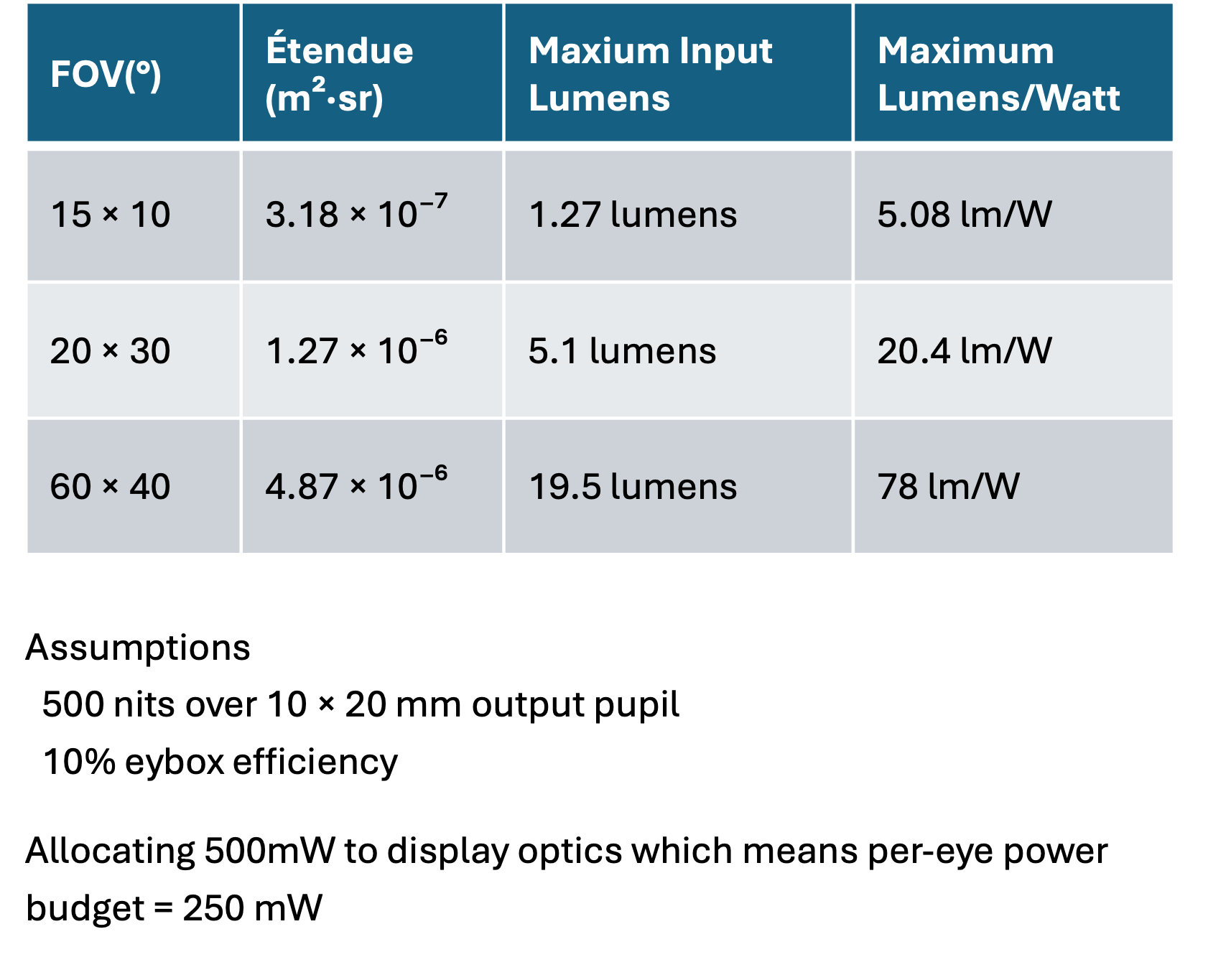

Over the years I’ve found it important to consider several factors: recent experimental results and modeling claims, alongside tougher benchmarks grounded in shipping products and historical performance limits. With that context, let’s run a simple first-order analysis to estimate the lumens-per-watt required for a viable AR display. These estimates are rough and focus on a single parameter, but the goal is to illustrate the strong interactions among multiple variables.

Start with weight.

The average weight of eyeglass frames is roughly 25 grams. Ray-Ban Meta glasses weigh around 50 grams and already sit near the upper boundary of what many users consider acceptable for extended wear.

If we assume that 50 grams is the practical target for all-day consumer AR glasses—based on the demonstrated acceptance of Ray-Ban Meta—that leaves 25 grams beyond the frame for everything else: battery, compute, memory, sensors, optics, and display.

Now divide that remaining mass.

For simplicity and based on experience, I’ll allocate 12.5 grams to the battery and 12.5 grams to the optics (including prescription lens, combiner, and waveguide). This split immediately imposes significant constraints on both available power and the feasibility of creating a viable display.

A 12.5-gram battery imposes a tight power budget. Using reasonable assumptions about battery performance and typical usage, you can estimate roughly 1.5 W of average system power to achieve an all-day wearable run time of about eight hours. If compute and sensing consume roughly two-thirds of that power, the display is left with approximately 0.5 W.
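The budget arithmetic above can be written out as a short sketch. It uses only the figures quoted in the text; the battery chemistry and usage assumptions behind the 1.5 W estimate are not given here, so that value is taken as an input rather than derived.

```python
# All values are the round numbers quoted in the text.
TOTAL_MASS_G = 50.0                  # practical all-day target for the glasses
FRAME_MASS_G = 25.0                  # typical eyeglass frame
BATTERY_MASS_G = 12.5                # allocation chosen in the text
OPTICS_MASS_G = 12.5                 # prescription lens, combiner, waveguide
SYSTEM_POWER_W = 1.5                 # average system power taken from the text
COMPUTE_SENSING_FRACTION = 2.0 / 3.0 # share of power used by compute and sensing


def remaining_mass_g(total_g: float, frame_g: float) -> float:
    """Mass left for everything other than the frame."""
    return total_g - frame_g


def display_power_w(system_power_w: float, compute_fraction: float) -> float:
    """Average power left for the display after compute and sensing."""
    return system_power_w * (1.0 - compute_fraction)


beyond_frame_g = remaining_mass_g(TOTAL_MASS_G, FRAME_MASS_G)
# The simplified two-way split uses the whole remaining budget.
unallocated_g = beyond_frame_g - BATTERY_MASS_G - OPTICS_MASS_G
display_w = display_power_w(SYSTEM_POWER_W, COMPUTE_SENSING_FRACTION)

print(f"mass beyond the frame: {beyond_frame_g:.1f} g")
print(f"unallocated after battery and optics: {unallocated_g:.1f} g")
print(f"display power budget: {display_w:.2f} W")
```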

Figure 3 presents simple, first-order calculations of the lumens-per-watt required under these assumptions. As expected, the required efficiency rises rapidly as field of view expands. This scaling is nonlinear; although this is well known, seeing the numbers helps internalize how dramatically the challenge increases with larger fields of view.

Figure 3: Example lumens-per-watt calculations.
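For readers who want to reproduce the shape of this scaling, here is a minimal first-order sketch. It is not the calculation behind Figure 3. It assumes the flux needed at the eye is luminance times eyebox area times the solid angle of the field of view (a small-angle approximation that ignores the cosine factor), and the luminance, eyebox size, and combiner efficiency values are hypothetical placeholders. Only the 0.5 W display budget comes from the text.

```python
import math


def rect_solid_angle_sr(fov_h_deg: float, fov_v_deg: float) -> float:
    """Solid angle (steradians) of a rectangular field of view, given full angles."""
    half_h = math.radians(fov_h_deg) / 2.0
    half_v = math.radians(fov_v_deg) / 2.0
    return 4.0 * math.asin(math.sin(half_h) * math.sin(half_v))


def required_lumens_per_watt(
    fov_h_deg: float,
    fov_v_deg: float,
    luminance_nits: float,
    eyebox_area_m2: float,
    combiner_efficiency: float,
    display_power_w: float,
) -> float:
    """First-order projector efficiency (lm/W) needed to fill the eyebox."""
    # Flux that must reach the eyebox: luminance x area x solid angle.
    flux_at_eye_lm = luminance_nits * eyebox_area_m2 * rect_solid_angle_sr(
        fov_h_deg, fov_v_deg
    )
    # Flux the projector must emit, given the combiner/waveguide losses.
    projector_lm = flux_at_eye_lm / combiner_efficiency
    return projector_lm / display_power_w


# Hypothetical inputs, for illustration only.
LUMINANCE_NITS = 1000.0          # assumed target luminance at the eye
EYEBOX_AREA_M2 = 0.010 * 0.008   # assumed 10 mm x 8 mm eyebox
COMBINER_EFFICIENCY = 0.01       # assumed 1% projector-to-eye efficiency
DISPLAY_POWER_W = 0.5            # display budget from the text

for fov_h, fov_v in [(20, 15), (40, 30), (60, 45)]:
    lm_per_w = required_lumens_per_watt(
        fov_h, fov_v, LUMINANCE_NITS, EYEBOX_AREA_M2,
        COMBINER_EFFICIENCY, DISPLAY_POWER_W,
    )
    print(f"{fov_h} x {fov_v} deg: {lm_per_w:.2f} lm/W required")
```

Because solid angle grows roughly with the square of the linear field of view, doubling both field angles roughly quadruples the required lumens per watt under this model.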

From my experience, achieving roughly five lumens per watt within the volume constraints of an eyewear-scale AR projector is feasible, but it sits at—or slightly beyond—the current state of the art. Higher efficiency figures are sometimes reported for various light-engine architectures, and I have seen well-constructed models suggesting substantially greater lumens per watt. In most cases, however, those projections depend on optimistic assumptions or require geometries that are incompatible with true eyewear form factors.

There are, of course, highly efficient consumer projection systems—pico projectors and home-theater projectors, for example—but those systems operate under far less stringent geometric constraints. Efficiency and miniaturization are widely understood to be inversely correlated: as the light engine is reduced to fit within eyewear dimensions, maintaining high optical efficiency becomes markedly more difficult.

This thought experiment does not prove the problem unsolvable, but it does clarify the scale of the challenge.

Recently, CREOL (Qian et al., 2025) reviewed microLED and laser beam–scanning approaches and estimated that roughly three lumens of monochrome output may be achievable within tight power budgets. That analysis, together with recent product introductions from companies such as Meta and Rokid offering smart glasses with small field‑of‑view displays, indicates that limited-display implementations are becoming technically feasible. However, it remains too early to determine whether these systems meaningfully address the underlying architectural trade‑offs. As with many new product launches, considerable hype surrounds these releases, and any firm conclusions about their long‑term viability—or their potential to scale to fully immersive AR within true eyewear constraints—would be premature.

A perspective on how to make progress

If incremental optimization is insufficient to solve the architectural challenge, the question becomes how to think about real progress. When I evaluate whether a field is likely to make a meaningful leap, I find it useful to categorize potential advances into three distinct but related approaches: technologies, modalities, and assumptions. These three approaches represent fundamentally different mechanisms for expanding the design space.

Defining the three approaches

Technologies refer to new components, materials, fabrication methods, or computational tools that improve performance within an existing architectural framework. A technological breakthrough typically enhances known system parameters such as brightness, efficiency, size, or speed. It advances performance by improving the building blocks.

Modalities denote new forms of information or operational frameworks that enable fundamentally different platforms and ways of sensing the world. A new modality does more than upgrade a component: it changes the types of data a system can access and, in doing so, reshapes the entire solution space. Often, a new modality drives improvements across multiple domains by enabling new architectures rather than by delivering a single, discrete solution.

Assumptions are the deepest layer. These are the foundational constraints that the field often accepts as given. Challenging an assumption does not optimize the architecture; it redefines it. If the correct assumption is overturned, the design space can change dramatically.

Each approach contributes to advancing augmented reality, operating at different depths within the system architecture. The next sections present one example for each level: a historical technological milestone, a current exploration in modalities, and an emerging shift in underlying assumptions. These examples are illustrative, intended to build intuition about each category.

Technologies: Moving Beyond the State of the Art

Technological advancement is the most familiar path forward. The question here is straightforward: what emerging technologies could allow us to move meaningfully beyond the current state of the art?

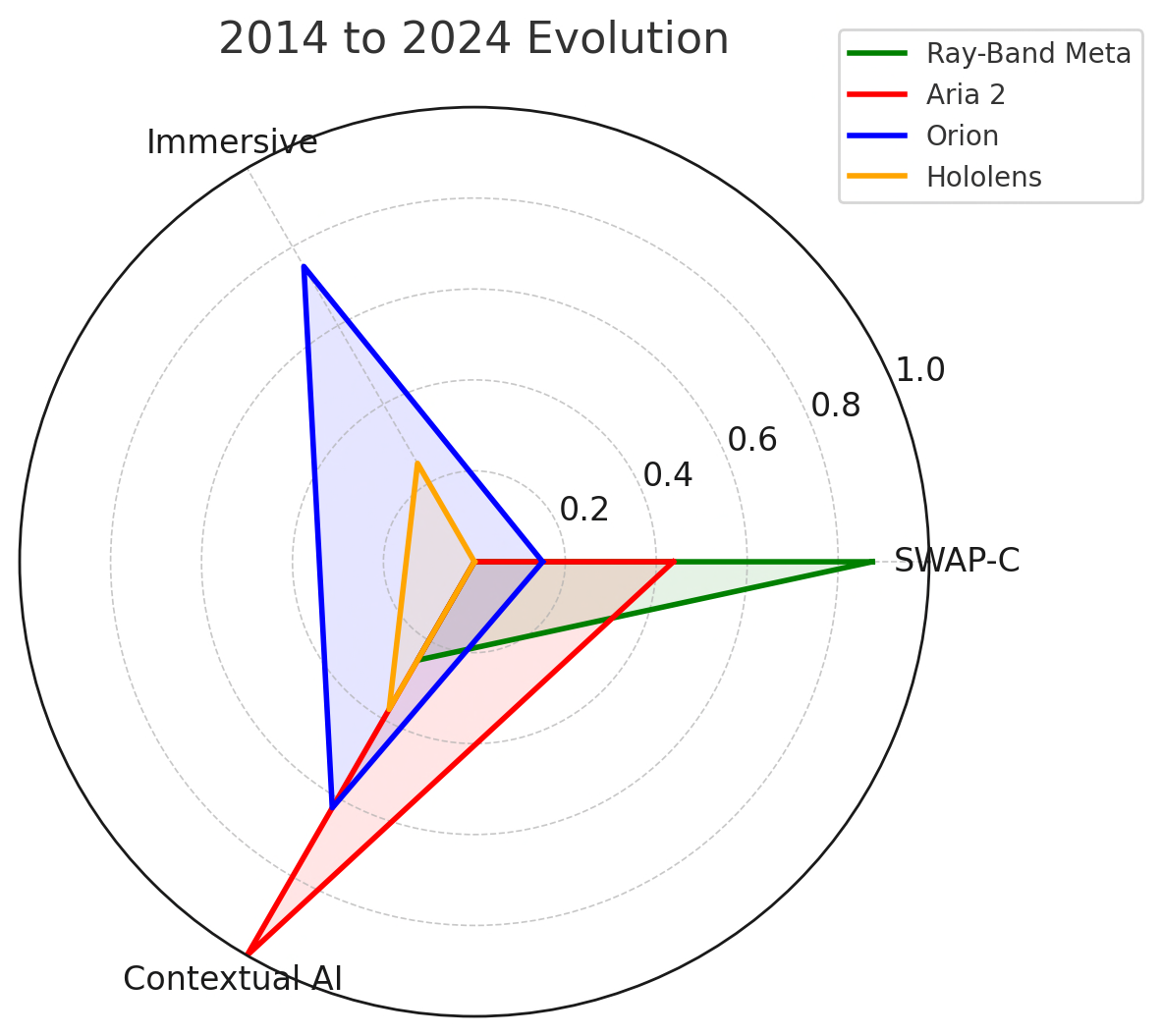

Approximately a decade ago, when immersive AR research was accelerating, a central question was, “What would it take to get beyond state-of-the-art as fast as possible?” At that time, the benchmarks included systems such as HoloLens V1 and early Magic Leap devices. These systems demonstrated feasibility but were far from consumer-viable eyewear. The task we were given was to move well beyond the state of the art and create a vision for what could be possible.

To create a meaningful advancement (specifically in AR display), it was necessary to identify technologies that could fundamentally improve immersion while maintaining a path toward glasses form factor.

Several key technological pillars emerged:

· High-brightness emissive microLED displays capable of extremely high lumens within a small footprint.

· High-index substrates, such as silicon carbide, that allow larger field of view within a single waveguide substrate.

· Advanced nanofabrication techniques to implement precise diffractive structures with minimal see-through artifacts.

· Rigorous computational infrastructure for waveguide design and optimization.

The combination of microLEDs and silicon carbide (SiC) substrates greatly expanded the design space. MicroLEDs’ high brightness enabled a large field of view in a very compact form factor. SiC’s high refractive index produced greater angular expansion in a thinner substrate, improving immersion while retaining a glasses-like geometry. It also reduced see-through artifacts, such as so-called rainbow artifacts. To illustrate the gains on the key metrics discussed earlier, Figure 4 adds HoloLens V1 to the spider diagram for comparison with Orion.

Figure 4: Spider diagram adding HoloLens V1 for comparison with the current state of the art.
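The link between substrate index and field of view can be illustrated with a first-order k-space estimate. This is a simplified sketch, not a waveguide design tool: it treats one dimension, one wavelength, and one plate, and it assumes light must travel between the total-internal-reflection limit and a maximum practical internal angle. The 75 degree limit and the index values are assumptions chosen for illustration (2.6 is only an approximate visible-band figure for SiC).

```python
import math


def max_fov_deg(refractive_index: float, max_internal_angle_deg: float = 75.0) -> float:
    """First-order 1D field-of-view limit for a single diffractive waveguide plate.

    In normalized k-space, guided light must sit between the TIR limit (1.0)
    and n * sin(theta_max). The in-coupling grating shifts every field angle
    by the same amount, so the span 2 * sin(FOV / 2) must fit inside that band.
    """
    band = refractive_index * math.sin(math.radians(max_internal_angle_deg)) - 1.0
    if band <= 0.0:
        return 0.0  # no guided band available at this index
    half_span = min(band / 2.0, 1.0)  # sin(FOV / 2) cannot exceed 1
    return 2.0 * math.degrees(math.asin(half_span))


# Illustrative indices: ordinary glass, high-index glass, and roughly SiC.
for n in (1.5, 1.8, 2.0, 2.6):
    print(f"n = {n:.1f}: max FOV ~ {max_fov_deg(n):.0f} deg")
```

Real designs also have to handle two-dimensional pupil expansion, multiple wavelengths, and efficiency, so the absolute numbers here should not be read as achievable specifications. The trend with index is the point.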

When compared to earlier systems, the improvements were substantial. The field of view expanded dramatically, and the overall form factor was significantly improved. This is not to say that Orion is a viable product: there are still many issues on all three axes. Additionally, it took a significant investment of both time and money to reach what is only a prototype and is only partway to a viable solution. Using Orion as an example demonstrates how aggressively creating and implementing emerging technologies can produce significant advancements.

Modalities: Changing the Information Available to the System

Modalities operate differently. Instead of improving a component, they introduce new forms of information that reshape how the system can reason about the world.

Depth sensing provides a historical example. The introduction of consumer-viable depth sensors fundamentally changed imaging pipelines. Once depth became available alongside RGB, entirely new classes of algorithms and applications became possible.

In the context of AR and contextual AI, polarization imaging is a promising new modality. In some respects, polarization today resembles depth sensing before the launch of Kinect: the underlying technology is proven and demonstrations indicate it can significantly improve performance, yet no polarization sensor currently meets the size, weight, power, and cost constraints required for consumer devices.

Polarization imaging has existed in scientific communities for decades, but only recently have compact linear Stokes cameras become sufficiently practical for broader experimentation. Polarization adds dimensions of information beyond intensity, including degree of linear polarization and angle of linear polarization. These parameters encode material and surface properties that are invisible to conventional RGB imaging. Figure 5 shows an example comparing an intensity image with a degree of linear polarization image:

Figure 5: Comparison of intensity image (left) and degree of linear polarization image (right)
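As a concrete reference for these two quantities, here is a minimal per-pixel sketch using the standard linear Stokes definitions. It assumes four intensity samples taken behind linear polarizers at 0, 45, 90, and 135 degrees, which is a common layout for polarization sensors; the function names are illustrative and do not correspond to any particular camera SDK.

```python
import math


def linear_stokes(i0: float, i45: float, i90: float, i135: float):
    """Linear Stokes parameters from four polarizer-angle intensities."""
    s0 = 0.5 * (i0 + i45 + i90 + i135)  # total intensity
    s1 = i0 - i90                       # horizontal vs. vertical preference
    s2 = i45 - i135                     # +45 vs. -45 degree preference
    return s0, s1, s2


def dolp_aolp(i0: float, i45: float, i90: float, i135: float):
    """Degree (0..1) and angle (radians) of linear polarization for one pixel."""
    s0, s1, s2 = linear_stokes(i0, i45, i90, i135)
    if s0 <= 0.0:
        return 0.0, 0.0  # no light: polarization is undefined, report zeros
    dolp = math.hypot(s1, s2) / s0
    aolp = 0.5 * math.atan2(s2, s1)
    return dolp, aolp


# Fully polarized light at 0 degrees, then unpolarized light.
print(dolp_aolp(1.0, 0.5, 0.0, 0.5))
print(dolp_aolp(0.5, 0.5, 0.5, 0.5))
```

A conventional intensity image keeps only the first Stokes parameter. The other two are the extra information that a polarization sensor provides.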

In experimental work, polarization has demonstrated several potential benefits relevant to AR systems:

· Improved estimation of material properties when surfaces contain both diffuse and specular components (Baek et al., 2020).

· Enhanced robustness in gaze tracking, particularly under real-world conditions such as squinting or partial occlusion (Zurauskas et al., 2024).

· The potential to combine sparse depth sensing with polarization cues to reconstruct geometry with lower overall sensing burden (Kadambi et al., 2015).

· Improved handling of transmissive or transparent objects, which are notoriously difficult for traditional depth systems (Kalra et al., 2020).

The key architectural question is whether polarization sensing could improve contextual AI performance while reducing power, computation, or sensor redundancy. The academic work is promising, but it is too early to say whether it will have a meaningful impact on a real product.

Much of the success will depend on the viability of consumer-grade polarization sensors. Prototype wafer-level and metalens-based approaches suggest that integration into compact sensors may become feasible in the coming years. Figure 6 compares an early prototype sensor with a state-of-the-art commercial polarization sensor to highlight what might be possible in terms of size. Although the prototype is at an early stage, if such sensors can be realized with acceptable performance, polarization could represent a meaningful shift along the contextual AI axis without dramatically worsening size, weight, or power.

Figure 6: On the left is a prototype consumer polarization camera and on the right is a commercially available polarization camera to demonstrate the potential size reduction.

Assumptions: Can We Reshape Our Beliefs?

The deepest and potentially most powerful approach involves challenging core assumptions.

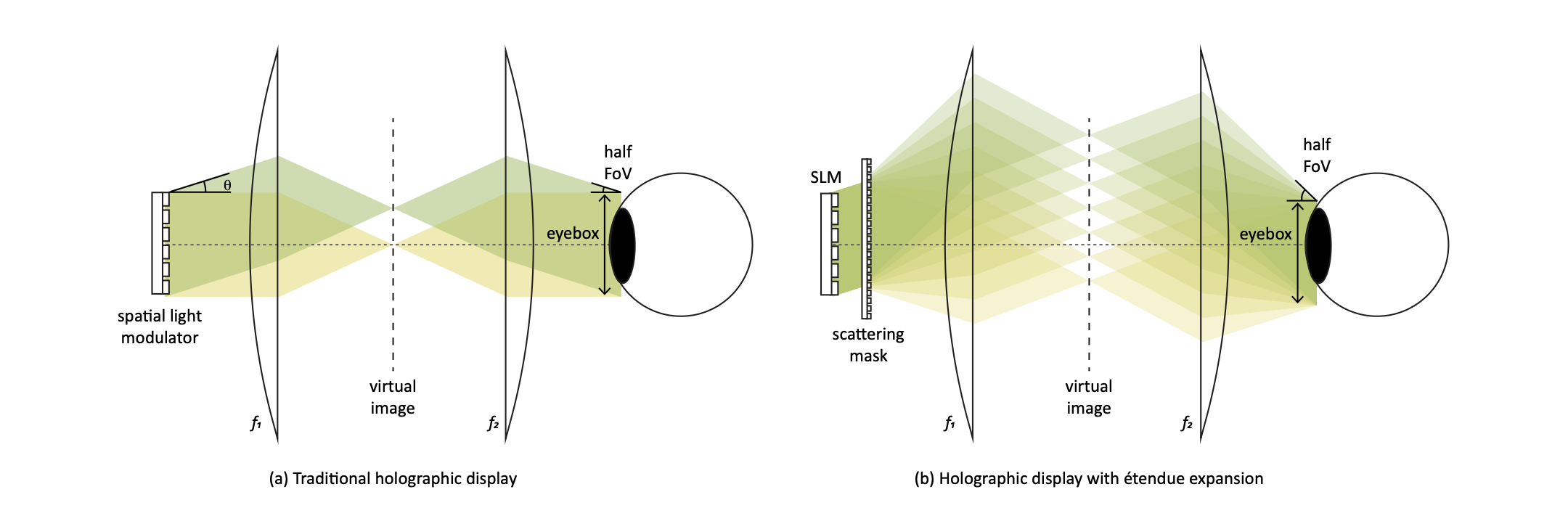

In waveguide-based AR systems, a common implicit assumption is that the projector must map collinearly to the eye box. In conventional architectures, each point on the microdisplay corresponds to a specific field angle at the output pupil. This collinearity constrains light propagation within the waveguide and imposes strict requirements on preserving angular information throughout the entire optical pipeline.
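The position-to-angle mapping described above can be sketched with an idealized collimating projector, where a pixel at offset x from the optical axis leaves the lens at angle atan(x / f). This is a thin-lens simplification for illustration only; the display width and focal lengths below are hypothetical.

```python
import math


def field_angle_deg(pixel_offset_mm: float, focal_length_mm: float) -> float:
    """Field angle produced by a pixel at a given offset from the optical axis."""
    return math.degrees(math.atan2(pixel_offset_mm, focal_length_mm))


def full_fov_deg(display_width_mm: float, focal_length_mm: float) -> float:
    """Full field of view spanned by a display centered on the optical axis."""
    return 2.0 * field_angle_deg(display_width_mm / 2.0, focal_length_mm)


# Hypothetical 4 mm wide microdisplay behind collimators of different focal lengths.
for focal_mm in (8.0, 4.0, 2.0):
    print(f"f = {focal_mm:.0f} mm: full FOV ~ {full_fov_deg(4.0, focal_mm):.0f} deg")
```

In this idealized picture every pixel owns exactly one field angle, and the rest of the optical pipeline is required to preserve that one-to-one relationship.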

This assumption has profound consequences. It drives the need for high refractive index materials to achieve large fields of view. It contributes to inefficiencies in light extraction. It complicates vergence-accommodation solutions. It reinforces the flat waveguide paradigm.

Emerging research suggests that this assumption may not be fundamental.

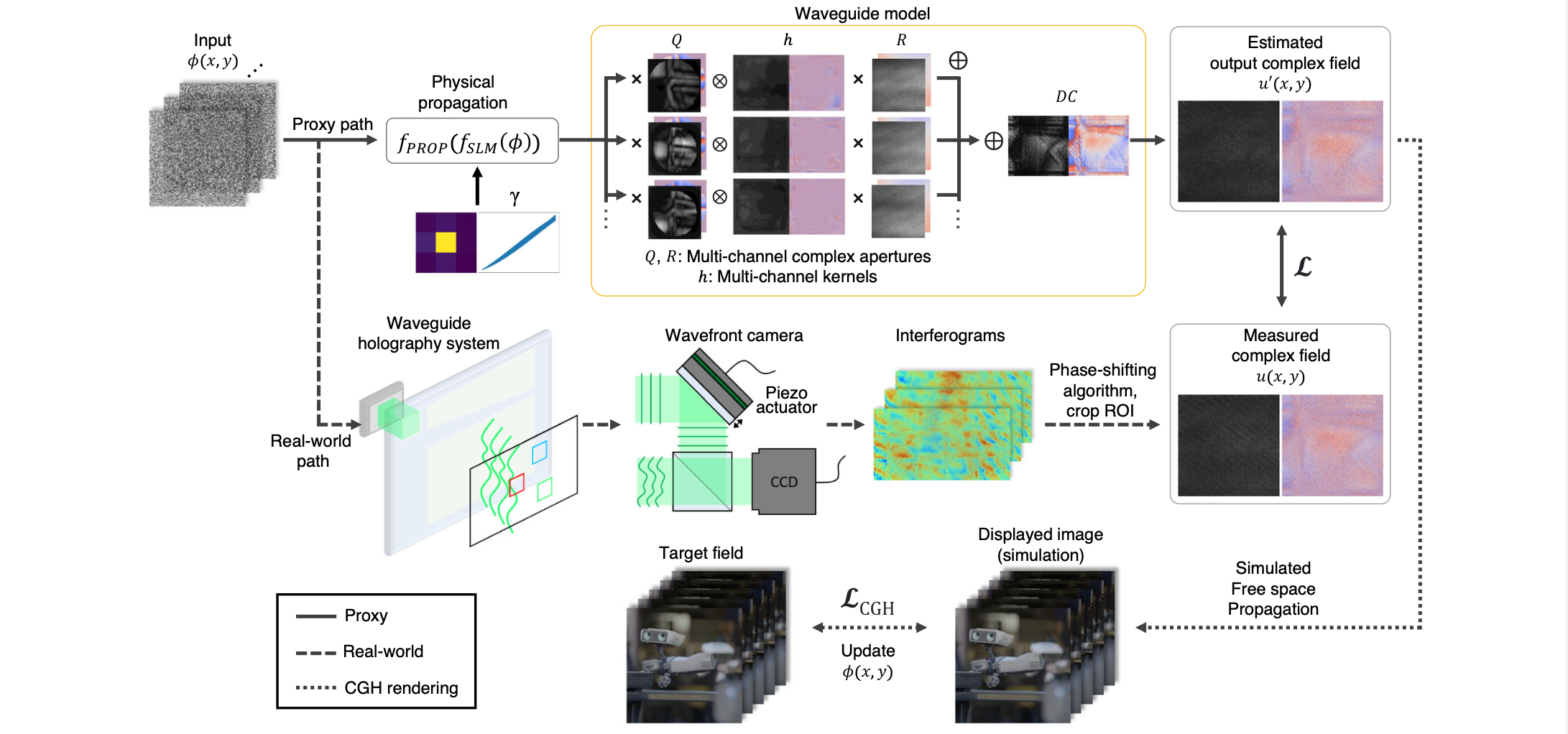

For example, étendue expansion using binary diffusers demonstrated that it is possible to expand angular content while sacrificing strict collinearity (Kuo et al., 2020). Subsequent work using trained metasurfaces further improved expansion ratios and image quality (Seng et al., 2024). More broadly, diffractive optical neural networks demonstrate that a sequence of trained diffractive layers can implement complex linear transformations between input and output optical fields (Isil et al., 2022).

Figure 7: Étendue expansion concept (Kuo et al., 2020)

Waveguide holography extends this idea into a waveguide context (Jang et al., 2024). Although current demonstrations are limited in field of view and remain experimental, they illustrate that non-collinear mapping within a waveguide is physically achievable.

Figure 8: Waveguide Holography concept (Jang 2024)

If collinear mapping can be relaxed or replaced with a learned transformation that preserves perceptual quality while improving étendue efficiency, several long-standing challenges may shift:

· Waveguide efficiency may improve.

· Index requirements for large field of view may decrease.

· Form factor constraints may relax.

· Novel solutions to vergence-accommodation conflict may become feasible.

At present, these approaches are academically interesting rather than consumer-ready. However, they illustrate the level of architectural rethinking that may be required to resolve the three-axis convergence problem.

Some final thoughts

Today, we can build smart glasses that meet SWaP-C constraints when no display is required. Adoption has been slow, but emerging trends indicate real commercial utility beyond technical novelty. We can also create immersive prototypes that deliver compelling digital experiences, but their size, weight, power consumption, and cost remain unacceptable for all-day use. Although some prototypes offer impressive field of view, overall display quality still needs substantial improvement. Similarly, powerful contextual AI sensing platforms exist, but they are largely research systems: they lack integrated digital experiences and fall short on SWaP-C.

What we do not yet know is how to combine all three elements—SWaP-C-compliant hardware, high-quality displays, and contextual AI—into a single, consumer-viable, all-day wearable. My aim in publishing this is not to advocate a particular technology or solution, but to frame the problem and suggest different approaches for making meaningful progress. Specifically, I want to motivate a broader perspective that places the overall system architecture before individual component choices.